Artificial intelligence (AI) models are becoming increasingly sophisticated and powerful, able to perform a wide range of tasks from generating text to translating languages to driving cars. One of the best-known examples is ChatGPT, a chatbot built on a large language model and known for its ability to generate fluent, human-like text.

But how do AI models like ChatGPT work? How do they store information and learn? In this article, we will explore the inner workings of AI models and answer these questions.

Data Storage

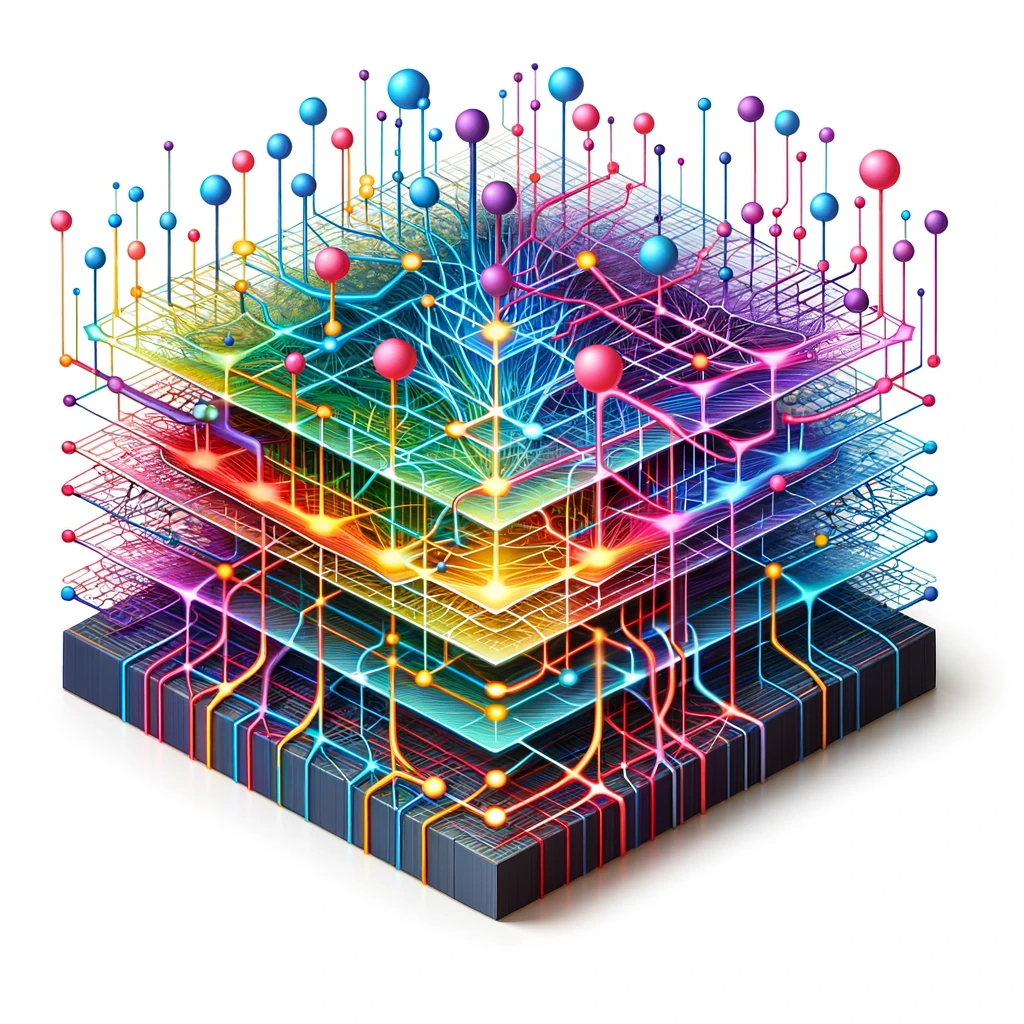

An AI model does not work like a database of personal user data. Instead, what it learned during training is stored in the form of weights: numerical values that set the strength of the connections between the artificial neurons in the model. The model's knowledge is encoded in these numbers rather than kept as records that can be looked up.
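To make the idea of weights more concrete, here is a minimal sketch of a single artificial neuron. The weight and bias values are made up for illustration and do not come from any real model, and the name `neuron_output` is hypothetical.

```python
# A toy neuron with two inputs. The numbers below are illustrative only.
weights = [0.5, -0.25]  # one weight per incoming connection
bias = 0.125            # a constant offset added to the weighted sum


def neuron_output(inputs, weights, bias):
    """Return the weighted sum of the inputs plus the bias, passed through ReLU."""
    total = sum(x * w for x, w in zip(inputs, weights)) + bias
    return max(0.0, total)  # ReLU: negative totals become 0.0


print(neuron_output([2.0, 1.0], weights, bias))
```

Everything this neuron "knows" lives in `weights` and `bias`. A large model follows the same principle with a vastly larger number of such values.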

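The weights of an AI model are updated during training, as the model is shown data and adjusted to reduce its errors. Once training ends, the weights of a deployed model are normally fixed. The model improves over time when its developers train or fine-tune a new version on additional data.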

Learning from Mistakes

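AI models learn from mistakes in a way that is loosely similar to humans. During training, the model's output is compared with the expected answer in the training data, and the difference is measured as an error. The model's weights are then adjusted slightly so that the error becomes smaller. Repeated over many examples, this process helps the model improve its performance over time.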

For example, if an AI model is trained to generate text, it may initially generate text that is grammatically incorrect or factually inaccurate. However, as the model is corrected, it will learn to generate text that is more accurate and grammatically correct.
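The correction process can be sketched in a few lines of code. This is a minimal illustration, assuming a model with a single weight `w` that should learn the rule y = 2x. The function name `train`, the learning rate, the step count, and the example data are all hypothetical choices. Real models apply the same basic idea (gradient descent) to far more weights.

```python
def train(examples, learning_rate=0.1, steps=50):
    """Fit y = w * x by gradient descent on the squared error."""
    w = 0.0  # start from an uninformed guess
    for _ in range(steps):
        for x, target in examples:
            prediction = w * x
            error = prediction - target    # how far off the model was
            gradient = 2 * error * x       # derivative of error**2 with respect to w
            w -= learning_rate * gradient  # nudge w in the direction that reduces the error
    return w


# Each pair is (input, correct answer); the hidden rule is y = 2x.
examples = [(1.0, 2.0), (2.0, 4.0), (3.0, 6.0)]
learned_w = train(examples)
print(learned_w)  # should end up very close to 2.0
```

The model is never told the rule directly. It only sees how wrong each prediction was, and the weight drifts toward the value that makes the errors small.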

Storage and Limits

AI models store their knowledge and capabilities in their architecture and weights. The weights are saved as files on servers and loaded into memory when the model runs, and both storage and memory are finite.

This means that there is a limit to the amount of information that an AI model can hold. The limit is set mainly by the number of weights, and a model with more weights needs more storage and memory. However, hardware capacity is constantly increasing, so this limit is becoming less of a constraint.
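A rough sense of how much space a model's weights occupy comes from multiplying the number of weights (parameters) by the bytes used to store each one. The sketch below assumes a hypothetical model with 7 billion parameters, and the function name `model_size_gb` is made up for this example. A 16-bit number takes 2 bytes and a 32-bit number takes 4.

```python
def model_size_gb(num_parameters, bytes_per_parameter=2):
    """Approximate size of the weights in gigabytes (1 GB = 1e9 bytes)."""
    return num_parameters * bytes_per_parameter / 1e9


hypothetical_parameters = 7_000_000_000  # assumed model size, for illustration

print(model_size_gb(hypothetical_parameters))     # 16-bit weights
print(model_size_gb(hypothetical_parameters, 4))  # 32-bit weights
```

This counts only the weights themselves. Running a model needs additional memory on top of that, so the figure is a lower bound rather than a full hardware requirement.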

Hardware Side

AI models require substantial computational power, both for training and inference (making predictions or generating responses). This is because AI models need to be able to perform a large number of calculations simultaneously.

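AI models are often run on specialized hardware called GPUs (Graphics Processing Units) or TPUs (Tensor Processing Units). Unlike the CPU in your computer, which is built to handle a small number of complex tasks at a time, these chips are designed to carry out huge numbers of simple arithmetic operations in parallel, which is exactly the kind of heavy calculation AI models require.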
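Much of that calculation is matrix multiplication. The sketch below is a plain-Python illustration, not how GPU code is actually written, and the function names are hypothetical. It shows that every cell of the result can be computed independently of the others, which is why the work parallelizes well, and it counts how many multiply-add steps one multiplication needs.

```python
def matmul(a, b):
    """Multiply two matrices given as lists of rows."""
    rows, inner, cols = len(a), len(b), len(b[0])
    # Each output cell depends only on one row of a and one column of b,
    # so all cells could in principle be computed at the same time.
    return [
        [sum(a[i][k] * b[k][j] for k in range(inner)) for j in range(cols)]
        for i in range(rows)
    ]


def multiply_add_count(rows, inner, cols):
    """Number of multiply-add steps in a (rows x inner) by (inner x cols) product."""
    return rows * inner * cols


print(matmul([[1, 2], [3, 4]], [[5, 6], [7, 8]]))
print(multiply_add_count(1024, 1024, 1024))  # over a billion steps for one product
```

A model performs many multiplications of this kind for every response it generates, which is why hardware that runs these steps side by side makes such a difference.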

Companies like OpenAI use massive computing clusters – banks of interconnected GPUs/TPUs – to train their most advanced models.

Human Brain vs. AI

The human brain and AI operate very differently. While both can process information and “learn,” the mechanisms are distinct.

The human brain is a complex network of neurons, with chemical and electrical processes. AI models, on the other hand, use mathematical operations and are bound by their architecture and the data they’ve been trained on.

In essence, AI models like ChatGPT are powerful and can handle vast amounts of information, but they’re not conscious, their weights keep no memory of past interactions, and they operate within the confines of their training and architecture.

Conclusion

AI models like ChatGPT are powerful tools that can be used for a wide range of tasks, but it is important to understand how they work and what their limitations are. They are not conscious and do not have the same abilities as humans. Even so, ongoing research in AI is making these models more efficient, ethical, and useful for a broad range of tasks.